"little brother" IoT

Move Code to Data

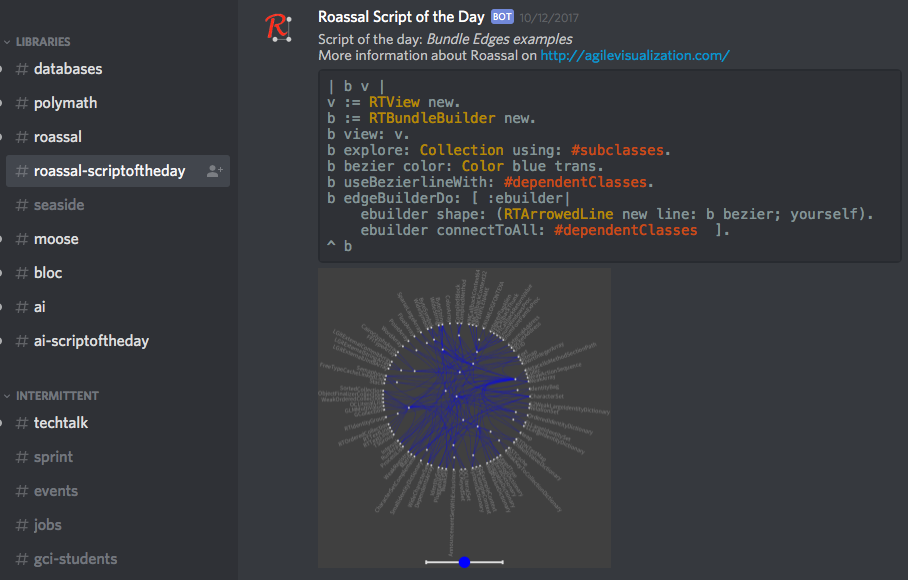

'little brother'

11/23/2017

The core notion behind “little brother” is to overcome the inevitable lag between the increase in data volume and increases in available bandwidth and centralized processing power. The insight that drives it is that data is arbitrarily large and grows exponentially, and is therefore difficult both to move and to analyze, no matter how much initial central computing power you throw at the problem. Moving data and analyzing it centrally also introduces complexity in securing private data, both while transferring it and while analyzing it.

The notion of “Big Brother” from Orwell’s 1984 rests on the assumption that all data could be both centralized and analyzed with sufficient depth that the posited centralized authority would be essentially omniscient. The name of this initiative plays off that, since such omniscience doesn’t appear to be possible given the exponential growth of such data. Maintaining and analyzing data locally is far more efficient, and allows the same overall scope of analysis without the same issues. As a result, “little brother” is a true ‘Internet of Things’ architecture, rather than the ‘CompuServe of Things’ architectures that have been offered by the major vendors.

The fastest way to move data of any size is to not move it. Not moving it also eliminates security issues, including public and individual concerns over data ownership, since the data stays with the collector, which can be any individual or group that can afford a Raspberry Pi, an inexpensive tablet or netbook, and a cloud microcontainer. It also solves the issue of data growing without an equivalent growth in processing power, since adding further data collectors inherently adds further analysis power. The difficulty is having appropriate analysis code where the data already is, capable of performing reasonably fast, complex analyses on that data in the same environment as the collector.
“little brother” solves that issue with a two-container system. Together, the containers analyze the actual data and store a copy of the data with all identifying information removed, correlated to the analysis code used to analyze it. The code is kept in online repositories, either Git or Nexus based. A distributed query runs across the metadata in the microcontainers and into the local container holding the cleaned data, finds the most appropriate analysis code available, and pulls it into the running containers where the query originated for use on the new data.

The analysis code can be modified via a tablet-based UI and immediately tested on the data to view the results. There is no need for any compile / build cycle, since all the code in the environment is live object code. Script snippets etc. can also be shared via Discord chat.

The analysis code itself is based on an agile visualization engine and a UI toolkit developed specifically for crafting custom analyses. It can be used to write a custom analysis in under 15 minutes, once the user becomes familiar with the scripting language. Whether an initial query is scripted or a perspective is simply chosen, the panels slide across horizontally, displaying the data you queried from and the results. The results can then be queried further, and at any point a visualization can be created to view the data in a more immediately meaningful way.

The data and analysis can be linked with textual information, hyperlinks and other needed artifacts to create reports, journalism and other types of aggregated artifacts that communicate a problem with all relevant context. These can be exported to common formats such as PDF or LaTeX, or served as auto-generated web pages via the built-in web server.
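As a rough illustration of the lookup step described above, and not the system's actual implementation, the following Python sketch shows one way a distributed query could pick analysis code. Everything in it is an assumption made for illustration: the `CatalogEntry` type, the idea of summarizing cleaned data as a numeric "fingerprint", the use of cosine similarity for ranking, and the node and script names. Each node answers with only its best candidate, so only small metadata replies cross the network and the raw data never moves.

```python
from dataclasses import dataclass
from math import sqrt
from typing import Dict, List, Optional, Sequence, Tuple


@dataclass(frozen=True)
class CatalogEntry:
    """Metadata held by a node: no raw or identifying data, only a summary."""
    script_ref: str                  # hypothetical reference to code in a repository
    fingerprint: Tuple[float, ...]   # hypothetical summary of the cleaned data


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Similarity of two fingerprints; 0.0 if either one is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def best_local_match(
    catalog: List[CatalogEntry], query: Sequence[float]
) -> Optional[Tuple[float, str]]:
    """Runs on one node: return (score, script_ref) of its best candidate."""
    if not catalog:
        return None
    best = max(catalog, key=lambda e: cosine_similarity(e.fingerprint, query))
    return cosine_similarity(best.fingerprint, query), best.script_ref


def distributed_query(
    nodes: Dict[str, List[CatalogEntry]], query: Sequence[float]
) -> Optional[str]:
    """Runs where the query originated: ask every node, keep the top reply."""
    replies = [best_local_match(catalog, query) for catalog in nodes.values()]
    scored = [reply for reply in replies if reply is not None]
    if not scored:
        return None
    return max(scored, key=lambda reply: reply[0])[1]


# Hypothetical nodes and scripts, purely for demonstration.
nodes = {
    "node-a": [
        CatalogEntry("vibration-drift", (0.9, 0.1, 0.0)),
        CatalogEntry("moisture-trend", (0.1, 0.8, 0.3)),
    ],
    "node-b": [CatalogEntry("load-spikes", (0.2, 0.2, 0.9))],
}
new_data_fingerprint = (0.85, 0.2, 0.05)
chosen = distributed_query(nodes, new_data_fingerprint)
print(chosen)
```

In this sketch the originating container would then pull the chosen script from its repository and run it against the local data. How fingerprints are actually computed and how nodes discover one another are left out entirely.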
It is of course designed with engineers in mind, but not intended only for software engineers, since both the models created from sensor data and the scripting language are easy enough for someone who doesn’t write software for a living to understand and add to their technical arsenal in a short time. Each part of the model can be inspected to get the names of fields and methods needed for the scripts, and any of the source can be viewed to see how it works and modified if necessary. Modifications to any of the code take effect the moment the code is saved. The visualization types can be viewed in the system in a gallery showing what each type can do, and the code for each example is also there, showing how to use the different visualization capabilities in various situations.

With the exception of the small bootstrapper, the entire system code is in the environment as source, including the VM, language, interpreter and JIT compiler, as well as the source to the frameworks being utilized. This also provides the kind of transparency necessary in data journalism / data activism, where the source data and methodologies may need to be validated by a third party.

The initial target for the system is areas with aging infrastructure and a lack of significant funding for large-scale analysis of that infrastructure. By placing cheap Raspberry Pi devices with the appropriate sensors, collecting data which is then built into a model by the system, and finding the best available analysis code as a starting point, infrastructure engineers can look for the beginnings of problems before they become catastrophic. A prime example of such an area is the concentration of ‘high rise slums’ in Hong Kong, many of which are not dissimilar to the now demolished Kowloon Walled City.
Lack of maintenance and the sheer size of the infrastructure involved are creating potentially catastrophic situations in Hong Kong and in numerous other cities worldwide, particularly those with significant slum populations and high population densities. An article on the situation, which affects ~3 million people in Hong Kong alone, one of many locales with such areas, is below:

https://www.thestar.com/life/2017/05/10/hong-kongs-shoebox-homes-slums-in-the-sky-pose-a-challenge-for-new-leader.html

The current lack of sufficient funding to even understand the scope of the problem implies that the system must run on the cheapest available hardware, with the least necessary bandwidth, and that the cloud microcontainers for distributed queries are truly microcontainers and are therefore either available in free tiers or very close to free. Individual microcontainers with 1 vCPU, 512 MB of RAM and a standard virtual network connection can run a common distribution of Linux (Debian, Ubuntu, Kubuntu, Mint) with sufficient speed to return millions of rows of initial data in response to a query, narrow that down to the best candidate analysis code across nodes, and return that code to the local Raspberry Pi in under a minute.

Since the distributed query network self-organizes and self-maintains, no additional work is needed beyond simply deploying nodes. A node will be made available with the system as a container deployable to any cloud service, although I personally use Joyent (now owned by Samsung), since Joyent doesn’t reduce network performance on microcontainers as Amazon, Google and others do.

The target price point is to be able to distribute the Raspberry Pi, sensors, and a tablet or netbook with the requisites to customize the analysis for ~$100-$150, while the microcontainer will run on available cloud services for less than $2/month. The Git and Nexus repositories needed are publicly available at no cost.
It should therefore be possible to make the system available to areas that need the local scope of the problem analyzed and that have capable people but little in the way of funding. Once analysis code has been tailored and the analysis completed, the tailored / expanded / changed scripts are correlated to cleaned data and pushed back to one of the available repositories. New data with patterns similar to that cleaned data will then discover the more appropriate, tailored analysis. Thus, the system’s capabilities grow as analyses are done, just as the total available computing power grows as data collection grows.

As a real-world example of the capabilities of the base analysis engine and associated tools, the epidemiology program Kendrick was written with similar base tooling in less than a month by one developer. Kendrick solved the epidemiology of the spread pattern of the Ebola virus, after Google had spent millions on big data analytics and failed to produce relevant results. Big data is good at finding patterns, but hopeless at finding the exceptions to common patterns that most often indicate a problem beginning to manifest.

The heading picture displays some of the basic tooling. The notebook facility in the first image allows the user to connect WYSIWYG text with hyperlinks etc. to source data and analyses, and create ‘data stories’ that can be exported to PDF or HTML, or served publicly via the built-in web server with no manual writing of web pages. This allows the analysis not only to be accomplished, but then to be used for data journalism, data activism and other things that might be necessary in such situations, since while the analysis may be accomplished inexpensively, the problems discovered may require much more serious funding to fix.
While the core Raspberry Pi code is in test, I’m currently using the analysis and data story toolkit on publicly available data to present a cogent case for the scope of the problem, and for the need for a solution that at least begins the process of eventually fixing “technical debt” that could prove far more harmful to far more people than the kind software developers are more accustomed to.


Author: Andrew Glynn, owner, architect and developer, philosopher, and soccer fan.
